Imagine a scenario where somebody transfers USD 81 trillion by mistake.

This isn’t fiction.

This really happened at Citigroup in April 2024 (it became public in 2025).

Their staff failed to delete pre-populated zeros in the backup system.

The error went unnoticed for about 90 minutes before it was flagged and reversed.

But 90 minutes is all it took to nearly collapse a financial empire.

This wasn’t a sophisticated cyberattack. This was a data quality failure.

Harvard Business Review states that only 3% of companies’ data meets basic quality standards.

And this costs companies dearly.

According to Forbes, poor-quality data drains an average of USD 12.9 million a year from each company.

If you’re a CDO or a CTO, you need a data quality management framework. Not next quarter. Now.

Today, I’ll walk you through what a data quality management framework is, the core components of a data quality governance framework, and exactly how to build a data quality management system that protects your organization from catastrophic failures.

Let’s start.

What is a data quality management framework?

A data quality management framework is your systematic approach to ensuring data accuracy, completeness, and reliability.

It’s your defence system against bad data.

The framework defines how you measure data quality, who owns data quality, what processes catch errors, and when the validation happens.

Without a framework, you’re fighting fires. With one, you’re preventing them.

What is total data quality management? It’s a comprehensive approach that embeds quality into every stage of your data lifecycle.

From collection to consumption, from ingestion to insights.

64% of organizations cite data quality as their top data integrity challenge. Yet most lack a systematic framework to address it.

Your data quality management framework must address the six core dimensions:

- Accuracy – Is the data correct and error-free?

- Completeness – Are all required fields populated?

- Consistency – Does data match across systems?

- Timeliness – Is data current enough for its use?

- Validity – Does data conform to defined formats?

- Uniqueness – Are there duplicates corrupting your datasets?
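
The six dimensions become concrete once you measure them. Below is a minimal Python sketch that scores a tiny customer table on each one as a pass rate between 0 and 1. Everything in it is hypothetical: the records and field names, the `verified_emails` dictionary standing in for a trusted source (accuracy needs something to compare against), the `crm_country` dictionary standing in for a second system (consistency), the fixed `AS_OF` date, and the 30-day freshness window.

```python
import re
from datetime import datetime, timedelta

# Hypothetical customer records; field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "country": "US", "updated_at": datetime(2025, 6, 1)},
    {"id": 2, "email": "bo@example",      "country": "DE", "updated_at": datetime(2025, 1, 5)},
    {"id": 2, "email": "bo@example.com",  "country": None, "updated_at": datetime(2025, 6, 2)},
    {"id": 3, "email": None,              "country": "FR", "updated_at": datetime(2025, 6, 3)},
]
# Hypothetical trusted source (for accuracy) and second system (for consistency).
verified_emails = {1: "ana@example.com", 2: "bo@example.com", 3: "cy@example.com"}
crm_country = {1: "US", 2: "DE", 3: "ES"}

EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")
AS_OF = datetime(2025, 6, 10)   # fixed "now" so results are reproducible
MAX_AGE = timedelta(days=30)    # assumed freshness requirement
REQUIRED = ("id", "email", "country")

def rate(passed, total):
    """Share of records passing a check, rounded to two decimals."""
    return round(passed / total, 2) if total else 1.0

def score_dimensions(rows):
    n = len(rows)
    return {
        # Accuracy: value agrees with the trusted source.
        "accuracy": rate(sum(r["email"] == verified_emails.get(r["id"]) for r in rows), n),
        # Completeness: every required field is populated.
        "completeness": rate(sum(all(r[f] is not None for f in REQUIRED) for r in rows), n),
        # Consistency: value matches the same field in another system.
        "consistency": rate(sum(r["country"] == crm_country.get(r["id"]) for r in rows), n),
        # Timeliness: record was updated within the freshness window.
        "timeliness": rate(sum(AS_OF - r["updated_at"] <= MAX_AGE for r in rows), n),
        # Validity: email conforms to the expected format.
        "validity": rate(sum(r["email"] is not None and EMAIL_RE.fullmatch(r["email"]) is not None
                             for r in rows), n),
        # Uniqueness: distinct keys relative to row count.
        "uniqueness": rate(len({r["id"] for r in rows}), n),
    }

print(score_dimensions(records))
```

Each dimension is reduced to a pass rate so the numbers can be compared, trended, and fed into the scoring and KPI sections later in this article.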

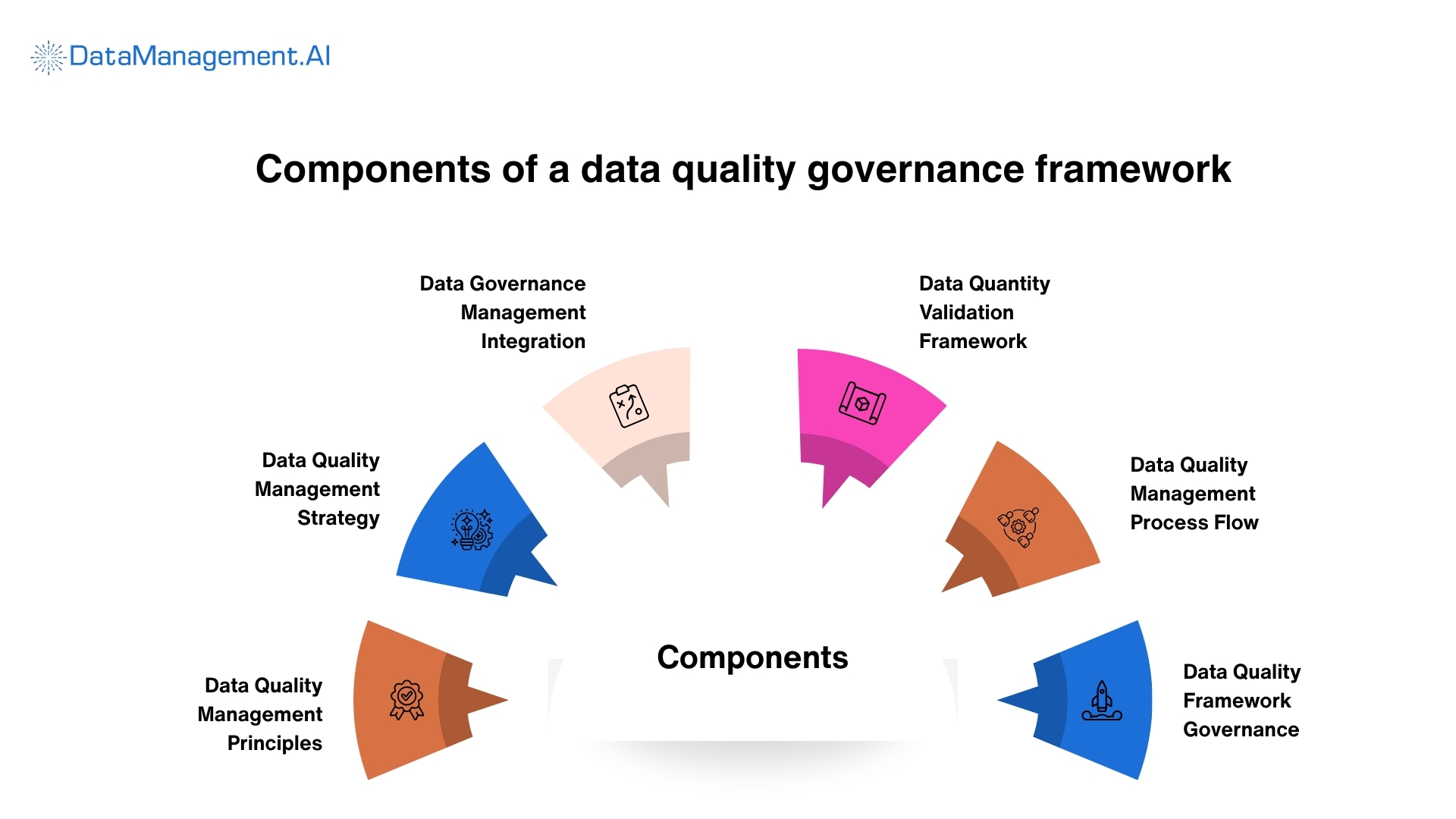

Critical components of a data quality governance framework

Building a data quality governance framework requires more than good intentions.

You need specific components working together systematically.

Let me break down the essentials.

Data Quality Management Principles

Your foundation starts with clear principles. These guide every decision.

The ISO/IEC 25012 data quality model defines comprehensive standards. Its inherent characteristics include accuracy, completeness, consistency, credibility, and currentness (timeliness).

It adds system-dependent characteristics such as availability, portability, and recoverability.

Your principles must be:

- Measurable – You can quantify them

- Enforceable – Systems can validate them automatically

- Business-aligned – They connect to real outcomes

Data Quality Management Strategy

Connect data quality to revenue impact. Show executives how bad data costs 31% of revenue on average.

Your data quality management strategy should prioritize based on business impact. Focus first on data affecting:

- Customer experience

- Revenue generation

- Regulatory compliance

- Operational efficiency

Data Governance Management Integration

Data quality and governance aren’t separate. They’re interconnected.

62% of organizations identify data governance as their biggest AI development challenge.

Why?

Because AI requires trustworthy data.

Your data governance and data quality framework must define:

- Data ownership and stewardship

- Access controls and permissions

- Compliance requirements

- Audit trails and lineage

- Quality standards and metrics

Integration means governance policies automatically enforce quality rules. Quality metrics feed back into governance decisions.

Data Quality Validation Framework

Validation is where theory meets reality.

Your data quality validation framework defines the rules and checks that enforce quality standards. How do you ensure data quality during the ingestion process? Through multiple layers of validation:

- Schema validation – Does incoming data match expected structure?

- Format validation – Are data formats, currencies, and identifiers correct?

- Range validation – Do numeric values fall within acceptable ranges?

- Referential integrity – Do foreign keys reference valid records?

- Business rule validation – Does data meet domain-specific requirements?
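
Here is a minimal Python sketch of those five layers applied to a single incoming payment record. The field names, the `KNOWN_ACCOUNTS` set standing in for an accounts table, the currency subset, the amount ceiling, and the dual-approval rule are all hypothetical values chosen for illustration. The range check in layer 3 is the kind of rule that could flag an order-of-magnitude error like the Citigroup one.

```python
from decimal import Decimal, InvalidOperation

# Hypothetical reference data and limits, for illustration only.
EXPECTED_SCHEMA = {"payment_id": str, "account_id": str, "currency": str, "amount": str}
ISO_CURRENCIES = {"USD", "EUR", "GBP"}     # tiny subset of ISO 4217 codes
KNOWN_ACCOUNTS = {"ACC-001", "ACC-002"}    # stands in for an accounts table
MAX_AMOUNT = Decimal("1000000")            # assumed per-payment ceiling
DUAL_APPROVAL_OVER = Decimal("10000")      # assumed four-eyes threshold

def validate_payment(record):
    """Run the validation layers in order; return a list of error messages."""
    # 1. Schema: every expected field is present with the expected type.
    #    (Amounts arrive as strings so no precision is lost to floats.)
    for name, expected_type in EXPECTED_SCHEMA.items():
        if not isinstance(record.get(name), expected_type):
            return [f"schema: {name} missing or not {expected_type.__name__}"]
    errors = []
    # 2. Format: known currency code, amount parses as a finite number.
    if record["currency"] not in ISO_CURRENCIES:
        errors.append(f"format: unknown currency {record['currency']}")
    try:
        amount = Decimal(record["amount"])
    except InvalidOperation:
        amount = None
    if amount is None or not amount.is_finite():
        return errors + ["format: amount is not a finite number"]
    # 3. Range: reject non-positive amounts and order-of-magnitude typos.
    if not Decimal("0") < amount <= MAX_AMOUNT:
        errors.append(f"range: amount {amount} outside (0, {MAX_AMOUNT}]")
    # 4. Referential integrity: the account must exist.
    if record["account_id"] not in KNOWN_ACCOUNTS:
        errors.append(f"reference: unknown account {record['account_id']}")
    # 5. Business rule: large payments need a named second approver.
    if amount > DUAL_APPROVAL_OVER and not record.get("approved_by"):
        errors.append("business: amounts over 10000 need a second approver")
    return errors

ok = {"payment_id": "P1", "account_id": "ACC-001", "currency": "USD", "amount": "280.00"}
typo = {**ok, "payment_id": "P2", "amount": "81000000000000"}
print(validate_payment(ok))    # []
print(validate_payment(typo))  # range and business-rule errors
```

Schema failures return immediately because later layers assume the fields exist; the other layers collect every error so a steward sees the full picture in one pass.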

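Validation isn’t optional. And timing matters: check each record against the rules and reference data that were in effect when the event happened, not only against today’s.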

Data Quality Management Process Flow

Your process flow defines when and where quality checks happen.

The data quality management process should include:

- Ingestion Stage – Validate data as it enters your systems. Reject or quarantine data that doesn’t meet standards.

- Transformation Stage – Monitor data quality as it moves through pipelines. Track how transformations affect quality metrics.

- Storage Stage – Continuously profile data at rest. Detect drift, decay, and corruption.

- Consumption Stage – Validate data before it feeds reports, dashboards, or models.

Your data quality management process flow must enable real-time detection, with automated alerts and immediate quarantine of bad data.
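
A minimal Python sketch of these stage gates: each stage applies its processing step and then its quality check; passing records move on, and failing ones go to a quarantine list with the stage and reason instead of being silently dropped. The checks, the cents-to-dollars transformation, the reporting ceiling, and the `alert` function (which only prints here) are hypothetical placeholders. Storage-stage profiling runs on data at rest rather than per record, so it isn't modeled here.

```python
from dataclasses import dataclass, field

@dataclass
class PipelineRun:
    passed: list = field(default_factory=list)
    quarantined: list = field(default_factory=list)  # (stage, reason, record)

def alert(stage, reason, record):
    # Hypothetical hook; a real system would page, post to chat, or open a ticket.
    print(f"ALERT [{stage}] {reason}: {record}")

# Each check returns None on success or a reason string on failure.
def ingestion_check(r):
    return None if r.get("amount_cents") is not None else "missing amount"

def to_dollars(r):
    # Example transformation step: cents to dollars.
    return {**r, "amount_usd": r["amount_cents"] / 100}

def transformation_check(r):
    return None if r["amount_usd"] >= 0 else "negative amount after transform"

def consumption_check(r):
    return None if r["amount_usd"] < 1_000_000 else "amount too large for reporting"

def identity(r):
    return r

# (stage name, processing step, quality gate) in pipeline order.
STAGES = [
    ("ingestion", identity, ingestion_check),
    ("transformation", to_dollars, transformation_check),
    ("consumption", identity, consumption_check),
]

def run_pipeline(records):
    run = PipelineRun()
    for record in records:
        for stage, step, check in STAGES:
            record = step(record)
            reason = check(record)
            if reason:
                run.quarantined.append((stage, reason, record))
                alert(stage, reason, record)
                break  # stop processing; the record waits in quarantine
        else:
            run.passed.append(record)  # cleared every gate
    return run

run = run_pipeline([
    {"id": 1, "amount_cents": 25_000},
    {"id": 2},
    {"id": 3, "amount_cents": -500},
    {"id": 4, "amount_cents": 8_100_000_000},
])
print([r["id"] for r in run.passed])               # [1]
print([stage for stage, _, _ in run.quarantined])  # ['ingestion', 'transformation', 'consumption']
```

Keeping the stage name with each quarantined record shows exactly where in the flow a problem was caught, which is what root cause analysis needs later.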

Data Quality Framework Governance

The connection between your data quality framework and data governance components is critical.

Governance without quality checks is toothless. Quality checks without governance context are meaningless.

Your integrated approach should:

- Embed quality rules in governance policies

- Use quality metrics in governance dashboards

- Trigger governance workflows based on quality thresholds

- Maintain audit trails linking quality incidents to governance decisions
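
The last two points can be sketched in a few lines of Python: a governance policy records the owner and minimum quality score for each dataset, a breach opens a workflow item assigned to that owner, and every incident and decision is appended to an audit log. The dataset names, owners, and thresholds are hypothetical, and the in-memory `audit_log` list stands in for a ticketing system and an append-only store.

```python
import itertools
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Policy:
    owner: str        # accountable data steward or team
    min_score: float  # quality score below which governance steps in

# Hypothetical governance policies keyed by dataset name.
POLICIES = {
    "payments": Policy(owner="treasury-stewards", min_score=0.99),
    "marketing_leads": Policy(owner="marketing-ops", min_score=0.90),
}

audit_log = []                      # in-memory stand-in for an append-only store
_incident_ids = itertools.count(1)  # sequential incident numbers

def log(event, **details):
    """Append a timestamped audit entry."""
    audit_log.append({"at": datetime.now(timezone.utc).isoformat(),
                      "event": event, **details})

def check_policy(dataset, score):
    """Open a governance workflow if the score breaches the dataset's policy."""
    policy = POLICIES[dataset]
    if score >= policy.min_score:
        return None
    incident = f"DQ-{next(_incident_ids)}"
    log("quality_breach", incident=incident, dataset=dataset,
        score=score, threshold=policy.min_score)
    log("workflow_opened", incident=incident, assignee=policy.owner)
    return incident

def record_decision(incident, decision, decided_by):
    """Link the steward's decision back to the incident in the audit trail."""
    log("governance_decision", incident=incident, decision=decision, by=decided_by)

incident = check_policy("payments", 0.97)  # below 0.99, so a workflow opens
check_policy("marketing_leads", 0.95)      # within policy, nothing logged
record_decision(incident, "hold downstream reports until fixed", "treasury-stewards")
for entry in audit_log:
    print(entry["event"], entry["incident"])
```

Because the breach, the assignment, and the decision share one incident ID, an auditor can trace any governance decision back to the quality measurement that triggered it.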

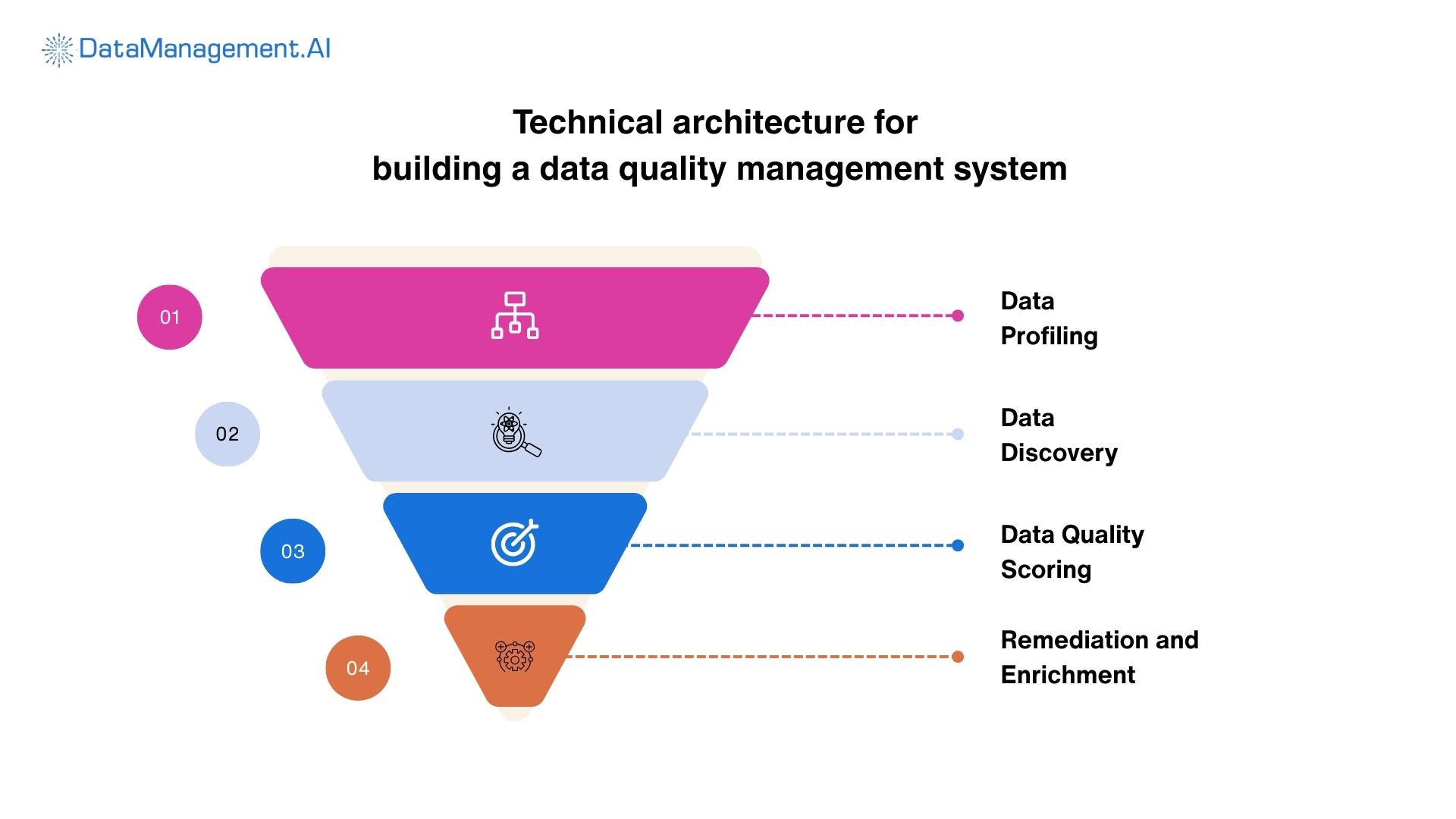

Technical architecture for building your data quality management system

Time to get technical.

What does a functioning data quality management system actually look like?

The architecture consists of five layers:

Data Profiling and Discovery

Your system must automatically profile incoming data, identify patterns, detect anomalies, and understand distributions.

Tools use machine learning to baseline normal data behaviour. Deviations trigger alerts.
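
Production tools may learn baselines with machine learning; as a simpler stand-in, this Python sketch profiles a column (null rate, distinct count, min, max, mean) and uses a z-score to flag a daily row count that sits more than three standard deviations from its recent history. The sample numbers and the 3-sigma threshold are assumptions for illustration, not a recommended setting.

```python
import statistics

def profile(values):
    """Basic column profile. Assumes at least one non-null value."""
    present = [v for v in values if v is not None]
    return {
        "null_rate": round(1 - len(present) / len(values), 2),
        "distinct": len(set(present)),
        "min": min(present),
        "max": max(present),
        "mean": round(statistics.mean(present), 2),
    }

def is_anomalous(history, today, z_threshold=3.0):
    """Flag today's metric if it deviates more than z_threshold std devs from history."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # needs at least two data points
    if stdev == 0:
        return today != mean
    return abs(today - mean) / stdev > z_threshold

print(profile([120.0, 95.5, None, 120.0, 88.5]))
# {'null_rate': 0.2, 'distinct': 3, 'min': 88.5, 'max': 120.0, 'mean': 106.0}

daily_row_counts = [10_120, 9_980, 10_050, 10_210, 9_940, 10_070, 10_000]
print(is_anomalous(daily_row_counts, 10_090))  # False: a normal day
print(is_anomalous(daily_row_counts, 4_300))   # True: likely a partial load
```

Storing each day's profile and comparing it with the previous ones is also how drift and decay in data at rest show up.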

Quality Rules Engine

This layer enforces your validation rules. It evaluates data against defined standards in real time.

Modern rules engines support:

- Declarative rule definitions

- Real-time evaluation

- Automatic rule learning from patterns

- Context-aware validation
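
To show what "declarative" means in practice, here is a tiny Python rules engine: rules are plain data (a field, a named check, an argument, and a severity) that could live in a configuration file, and one generic function evaluates any rule against any record. The rule names, fields, and the small set of checks are hypothetical; real engines support far richer expressions, and rule learning and context-aware validation are out of scope for this sketch.

```python
import re

# Named checks that declarative rules can reference.
# Each takes the field value and the rule's argument and returns True on pass.
CHECKS = {
    "not_null": lambda value, _arg: value is not None,
    "in": lambda value, allowed: value in allowed,
    "matches": lambda value, pattern: isinstance(value, str) and re.fullmatch(pattern, value) is not None,
    "between": lambda value, bounds: value is not None and bounds[0] <= value <= bounds[1],
}

# Hypothetical rules; in practice these might be loaded from a config file.
RULES = [
    {"name": "customer_id_present", "field": "customer_id", "check": "not_null", "arg": None, "severity": "error"},
    {"name": "country_iso2", "field": "country", "check": "matches", "arg": r"[A-Z]{2}", "severity": "error"},
    {"name": "age_plausible", "field": "age", "check": "between", "arg": (0, 120), "severity": "warning"},
    {"name": "tier_known", "field": "tier", "check": "in", "arg": {"gold", "silver", "bronze"}, "severity": "warning"},
]

def evaluate_rules(record, rules=RULES):
    """Return (rule name, severity) for every rule the record fails."""
    return [(rule["name"], rule["severity"])
            for rule in rules
            if not CHECKS[rule["check"]](record.get(rule["field"]), rule["arg"])]

print(evaluate_rules({"customer_id": "C-9", "country": "US", "age": 42, "tier": "gold"}))
# []
print(evaluate_rules({"customer_id": None, "country": "usa", "age": 230, "tier": "gold"}))
# [('customer_id_present', 'error'), ('country_iso2', 'error'), ('age_plausible', 'warning')]
```

Separating rule definitions from the evaluation code means data stewards can add or tune rules without touching the engine.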

Monitoring and Observability

Leading data observability platforms assess data across your ecosystem from a single dashboard. They enable:

- Automated monitoring

- Root cause analysis

- Data lineage tracking

- SLA tracking

- Real-time anomaly alerts

Data Quality Scoring

Your system must quantify quality.

Assign scores to datasets, tables, and individual records.

Scoring helps prioritize remediation efforts.

Focus on high-impact and low-quality data first.
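
One way to do this, sketched in Python under assumed weights: compute each dataset's score as a weighted average of per-dimension pass rates, then rank datasets by business impact multiplied by the quality gap (1 minus the score), so high-impact, low-quality data rises to the top. The dataset names, pass rates, weights, and 1 to 5 impact ratings are hypothetical, and only four of the six dimensions are used for brevity.

```python
# Hypothetical weights per quality dimension (they sum to 1.0).
WEIGHTS = {"completeness": 0.3, "validity": 0.3, "uniqueness": 0.2, "timeliness": 0.2}

# Hypothetical pass rates and business impact (1 = low, 5 = critical).
DATASETS = {
    "payments": {"impact": 5, "scores": {"completeness": 0.99, "validity": 0.90,
                                         "uniqueness": 1.00, "timeliness": 0.95}},
    "crm_contacts": {"impact": 3, "scores": {"completeness": 0.70, "validity": 0.80,
                                             "uniqueness": 0.85, "timeliness": 0.60}},
    "web_logs": {"impact": 1, "scores": {"completeness": 0.50, "validity": 0.60,
                                         "uniqueness": 0.90, "timeliness": 0.99}},
}

def quality_score(scores):
    """Weighted average of dimension pass rates, in [0, 1]."""
    return sum(WEIGHTS[dim] * scores[dim] for dim in WEIGHTS)

def remediation_priority(datasets):
    """Rank datasets by impact x quality gap, highest priority first."""
    ranked = []
    for name, info in datasets.items():
        score = quality_score(info["scores"])
        ranked.append((name, round(score, 3), round(info["impact"] * (1 - score), 3)))
    return sorted(ranked, key=lambda row: row[2], reverse=True)

for name, score, priority in remediation_priority(DATASETS):
    print(f"{name:<13} score={score:.3f} priority={priority:.3f}")
```

With these numbers, `crm_contacts` ranks first: it matters moderately but has the largest quality gap, while `payments` already scores high enough that its gap is small despite its critical impact.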

Remediation and Enrichment

The system should support both automated and manual remediation.

Clean, standardize, enrich, and deduplicate data.
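
For the automated side, here is a minimal Python sketch of a clean, standardize, and deduplicate pass over contact records: it collapses whitespace and normalizes name casing, lowercases emails, strips phone numbers to digits, then keeps the most recently updated record per email. The field names, the use of email as the matching key, and the "latest update wins" survivorship rule are assumptions; enrichment from external sources isn't shown, and real matching usually needs fuzzier keys.

```python
import re

def standardize(record):
    """Collapse whitespace, normalize casing, and reduce phones to digits."""
    return {
        **record,
        "name": " ".join(record["name"].split()).title(),
        "email": record["email"].strip().lower(),
        "phone": re.sub(r"\D", "", record.get("phone") or ""),
    }

def deduplicate(records, key="email"):
    """Keep one record per key; the most recent 'updated' value wins."""
    survivors = {}
    for rec in map(standardize, records):
        current = survivors.get(rec[key])
        # ISO 8601 date strings compare correctly as plain strings.
        if current is None or rec["updated"] > current["updated"]:
            survivors[rec[key]] = rec
    return list(survivors.values())

raw = [
    {"name": "  jane   DOE ", "email": "Jane.Doe@Example.com ", "phone": "(555) 010-2000", "updated": "2025-03-01"},
    {"name": "Jane Doe", "email": "jane.doe@example.com", "phone": "555.010.2000", "updated": "2025-05-20"},
    {"name": "raj patel", "email": "RAJ@example.com", "phone": None, "updated": "2025-01-15"},
]
for rec in deduplicate(raw):
    print(rec)
```

Standardizing before deduplicating matters: the two Jane Doe rows only match once their emails are trimmed and lowercased.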

Procter & Gamble’s Fragmented Data Quality Woes

A powerful real-world example of adopting a data quality management framework is that of Procter & Gamble (P&G).

They faced massive operational inefficiencies due to fragmented and inconsistent data across all their business units.

With close to 48 different SAP instances and billions of records, P&G struggled with siloed master data.

P&G addressed this by implementing a centralized data quality management framework focused on master data management (MDM) that established a single source of truth for their customer and product information.

The adoption process involved shifting from manual reactive data cleansing to an automated governance-led approach.

Prior to this, P&G analysts spent hours manually downloading data to resolve discrepancies in spreadsheets.

A standardized framework established clear data quality dimensions, such as accuracy, completeness, and consistency.

The impact was enormous.

Automated data quality checks reduced operational risk and improved productivity. They also minimized errors and data leakage.

The data quality management framework didn’t just fix records; it enabled more agile decision-making and a more efficient supply chain.

Your complete data quality management framework solution

After the technical deep dive, let’s get practical.

You understand the data quality management process.

You know the components. You see the architecture.

But building this from scratch? That’s 12-18 months of development. Millions in investment. And no guarantee it’ll work.

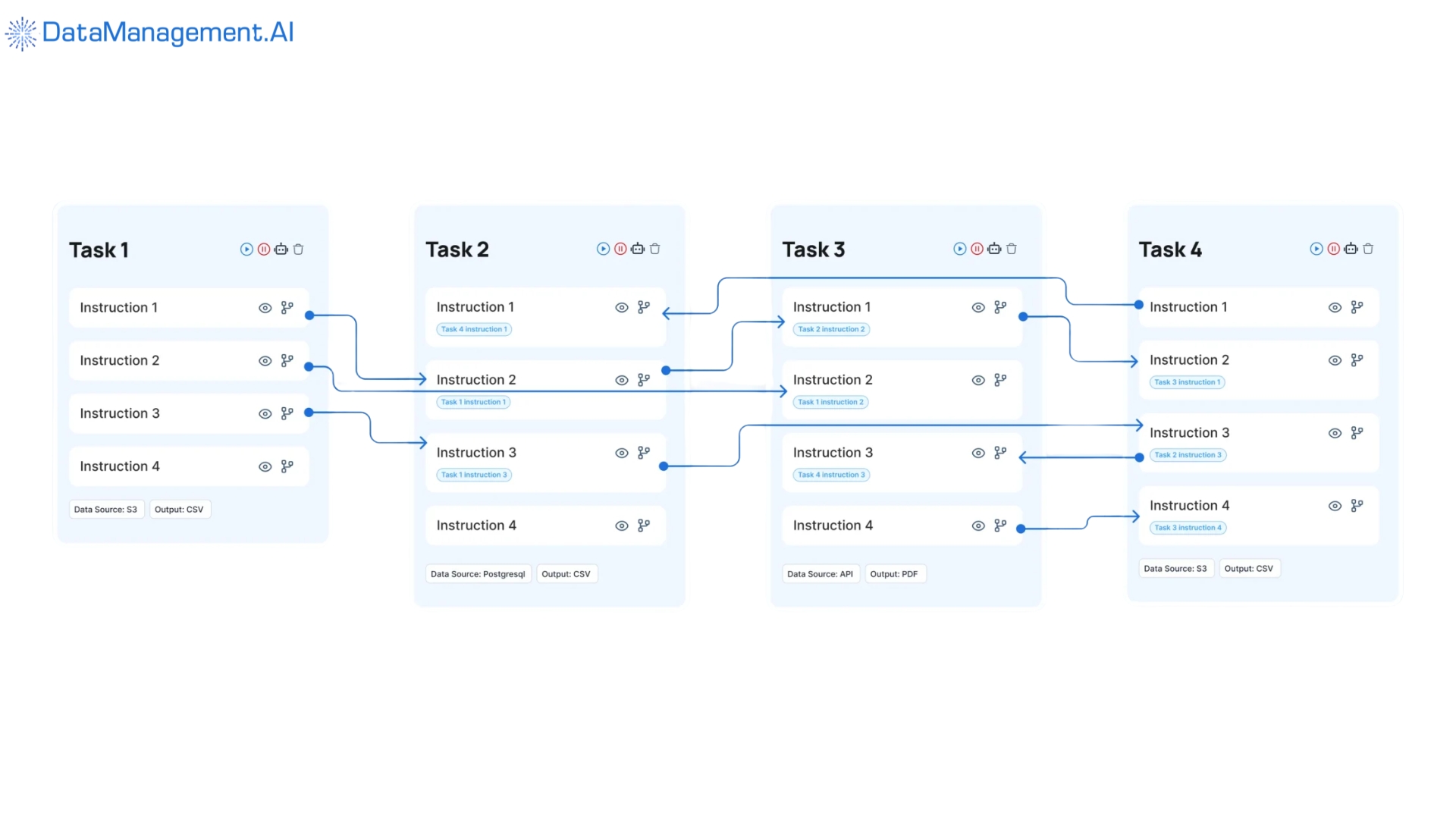

DataManagement.AI delivers a complete data quality management framework out of the box.

Our AI-powered agents automate every layer of your quality framework:

- Profile AI continuously analyzes your data landscape. It identifies patterns, anomalies, and quality issues automatically. No manual profiling needed. No blind spots.

- Quality AI implements your validation rules across all data. Real-time checks. Automated enforcement. Immediate alerts when quality thresholds are breached.

- Cleanse AI intelligently fixes data quality issues. It detects duplicates, standardizes formats, corrects errors. Your data becomes reliable without manual intervention.

- Validate AI ensures data quality during the ingestion process. Multiple validation layers. Automatic quarantine of bad data. Complete audit trails.

- Metadata AI maintains comprehensive data lineage. You see exactly where data comes from. How it transforms. Where it goes. Complete transparency.

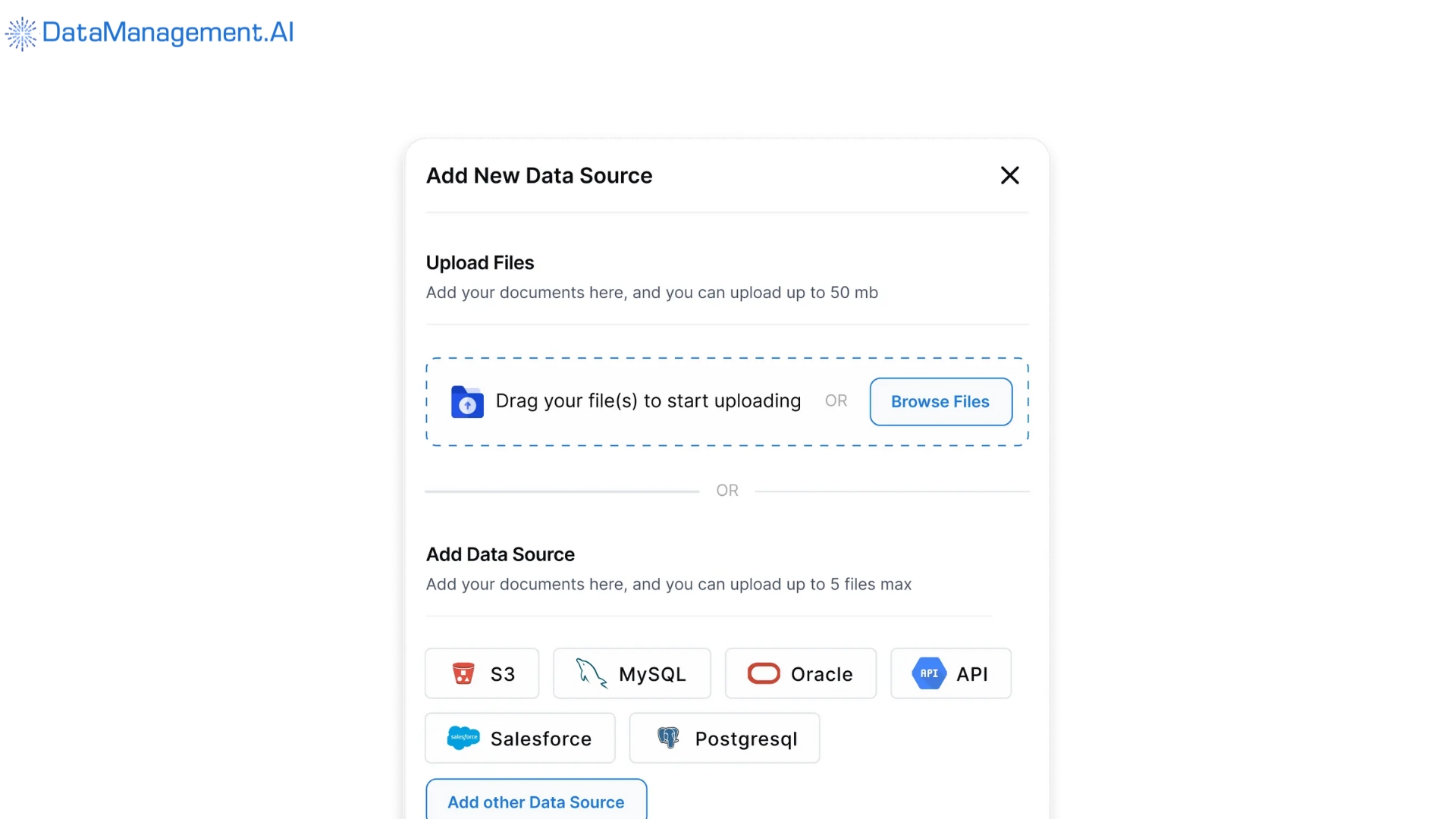

The DataManagement.AI platform delivers your complete data quality management system through an intuitive visual interface.

This visual interface allows you to control all aspects of data quality management.

As seen in the image above, you can easily drag and drop your required data sources.

Design complex validation pipelines in minutes. No coding required.

Organizations using DataManagement.AI report:

- 60% more efficiency in data operations

- Over 50% cost savings in data management

- Real-time detection of quality issues (minutes, not hours)

Our Chain-of-Data approach connects every component of your data quality governance framework: ingestion validation, pipeline monitoring, storage profiling, and consumption verification.

You get end-to-end lineage automatically.

Complete audit trails and compliance by design.

This isn’t just tooling. It’s your complete framework delivered as intelligent automation.

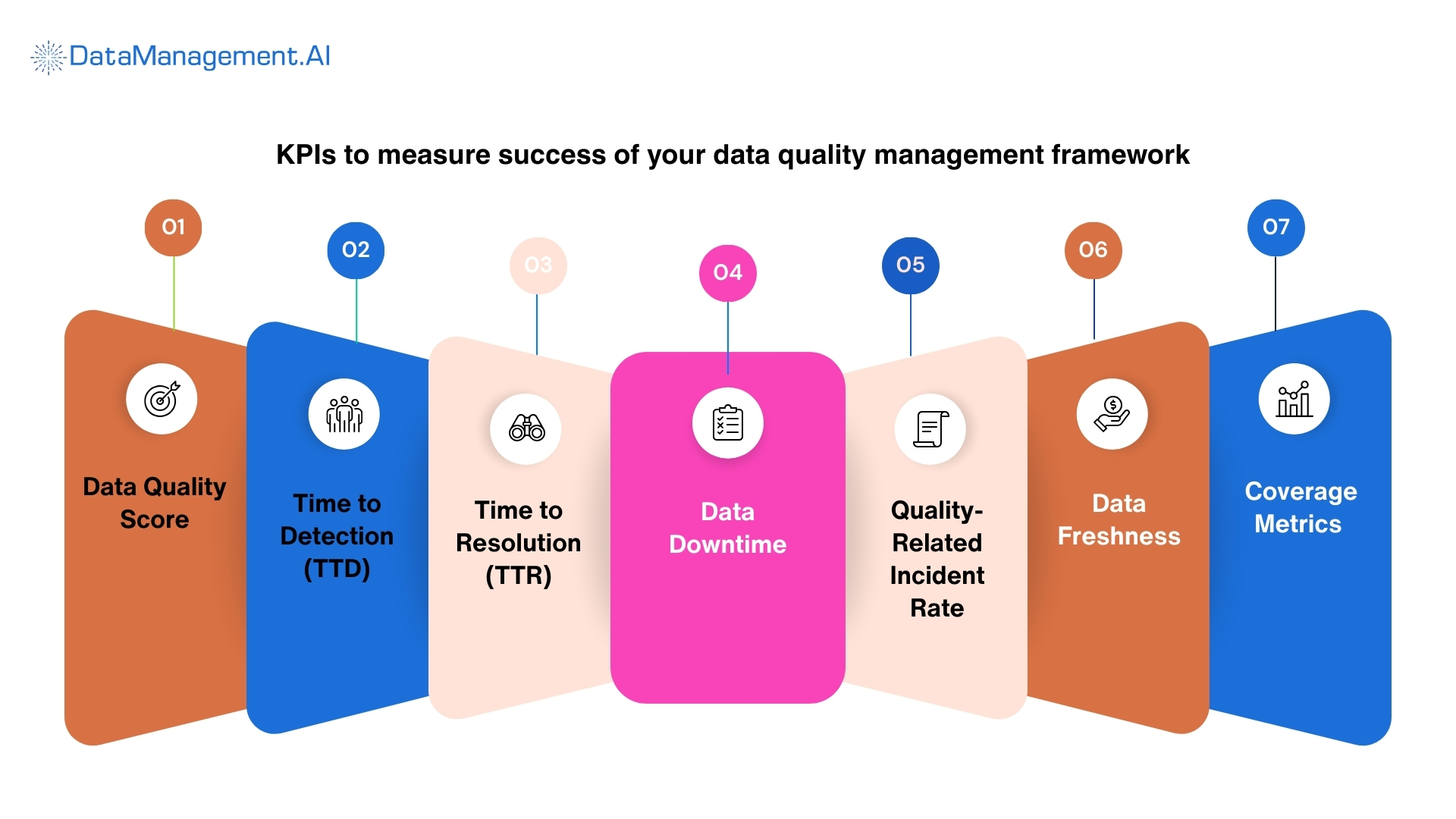

KPIs to measure success of your data quality management framework

You need concrete metrics to prove your framework works.

Here are the data quality management KPIs that matter to you:

- Data Quality Score – Percentage of data meeting all quality standards. Target should be 95%+ for critical datasets.

- Time to Detection (TTD) – How quickly you identify quality issues. Elite teams aim for under 30 minutes; the industry average is 4+ hours.

- Time to Resolution (TTR) – How fast you fix problems. Aim to cut it by 50% year over year.

- Data Downtime – Total time data is unavailable or unreliable. Measure in hours per month and drive toward zero.

- Quality-Related Incident Rate – Number of incidents per month. Track trends and show continuous improvement.

- Data Freshness – Age of data relative to requirements. Real-time businesses need second-level freshness.

- Coverage Metrics – Percentage of data assets with quality monitoring. Target should be 100% of critical data.
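
Several of these KPIs fall straight out of an incident log. This Python sketch computes mean time to detection, mean time to resolution, total data downtime, and coverage for one month. The incident records and asset counts are hypothetical, and two definitions are assumptions: TTR is measured from detection to resolution, and downtime from when bad data first appeared until it was resolved. Some teams define both differently.

```python
from datetime import datetime, timedelta

# Hypothetical incidents for one month: when bad data started, was detected, was resolved.
INCIDENTS = [
    {"started": "2025-06-02 08:00", "detected": "2025-06-02 08:20", "resolved": "2025-06-02 10:20"},
    {"started": "2025-06-11 14:00", "detected": "2025-06-11 18:00", "resolved": "2025-06-12 02:00"},
    {"started": "2025-06-25 01:00", "detected": "2025-06-25 01:40", "resolved": "2025-06-25 03:40"},
]

def parse(ts):
    return datetime.strptime(ts, "%Y-%m-%d %H:%M")

def mean_minutes(deltas):
    """Average of a list of timedeltas, in minutes."""
    return sum(deltas, timedelta()).total_seconds() / 60 / len(deltas)

def kpis(incidents, monitored_critical, total_critical):
    ttd = [parse(i["detected"]) - parse(i["started"]) for i in incidents]
    ttr = [parse(i["resolved"]) - parse(i["detected"]) for i in incidents]
    downtime = [parse(i["resolved"]) - parse(i["started"]) for i in incidents]
    return {
        "incidents": len(incidents),
        "mean_ttd_min": mean_minutes(ttd),
        "mean_ttr_min": mean_minutes(ttr),
        "downtime_hours": sum(downtime, timedelta()).total_seconds() / 3600,
        "coverage_pct": 100 * monitored_critical / total_critical,
    }

print(kpis(INCIDENTS, monitored_critical=42, total_critical=50))
# {'incidents': 3, 'mean_ttd_min': 100.0, 'mean_ttr_min': 240.0, 'downtime_hours': 17.0, 'coverage_pct': 84.0}
```

Against the targets above, this sample month would miss the 30-minute TTD goal and the 100% coverage goal for critical data, which is exactly the kind of gap these KPIs are meant to surface.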

Your data quality management framework in a nutshell

Poor data quality costs the average organization USD 12.9 million a year.

But comprehensive frameworks deliver measurable results.

You need:

- Clear data quality management principles aligned to business outcomes

- Integrated data governance and data quality framework

- Robust data quality validation framework with multi-layer checks

- Automated data quality management process flow

- Complete data quality management system with observability

- Practical data quality management plan template for implementation

DataManagement.AI delivers all of this through intelligent automation. Our AI agents implement your complete framework.

You get the full data quality governance framework that risk management demands, plus enterprise data quality batch processing that scales.

You also get real-time validation ensuring data quality during the ingestion process.

Your competitors are implementing frameworks now.

They’re protecting revenue, avoiding fines, and enabling AI initiatives.

Every day you wait costs money.

Every incident risks your reputation, and every decision based on poor data sets you back.

Schedule a demo with us to see how intelligent agents automate your complete data quality management framework. Transform your data from a liability to a strategic asset.

Frequently Asked Questions (FAQs) about Data Quality Management Framework

The following are some commonly asked questions about data quality management frameworks and related terminology.

Q.1 What is the first step in my data quality management strategy?

A. We begin by defining your business goals. A strong data quality management strategy aligns your technical rules with your high-level business objectives. This ensures that every piece of information you collect actually serves a purpose for your team’s decision-making.

Q.2 How can I ensure data quality during the ingestion process?

A. To keep your pipeline clean, you should implement a data quality validation framework at every entry point. By using specific data quality management techniques, like schema validation and null checks, you can prevent garbage data from entering your systems.

Q.3 What are the core data quality management principles I should know?

A. DataManagement.AI prioritizes transparency, accountability, and continuous improvement. By sticking to these data quality management principles, you ensure that data isn’t just fixed once, but stays reliable through a repeatable and governed data quality management process.

Q.4 How do I distinguish between a data governance framework and a data quality framework?

A. While they overlap, an integrated approach treats governance as the rules and quality as the result. Your data governance framework sets the policies, while DQM tools enforce them across your ecosystem.

Q.5 Is there a standard data quality management plan template I can use?

A. Yes. DataManagement.AI lets you start with a data quality management plan template that outlines roles, dimensions, and reporting frequencies. This helps you build a consistent data quality management plan tailored specifically to your organization’s unique data landscape.

Q.6 What does a typical data quality management process flow look like?

A. Your data quality management process flow usually follows a cycle that includes discover, define, measure, analyze, and improve. This circular data quality management process ensures you are constantly monitoring for new anomalies as your data evolves.

Q.7 How does a master data quality management framework help me?

A. When you have multiple departments, a master data quality management framework creates a single source of truth. DataManagement.AI syncs your core entities, like product lists, across your enterprise data quality batch processing systems to prevent costly duplicates.

Q.8 What is total data quality management (TDQM)?

A. Total data quality management is an approach where we treat data like a manufactured product. By integrating a total data quality management philosophy, you can involve everyone, from engineers to end users, in maintaining high standards throughout your data lifecycle.

Q.9 How do I handle large-scale data moves?

A. For massive migrations, we use enterprise data quality batch processing for data integration. This allows us to scrub and validate millions of records at once. This ensures your data quality management system remains robust even during high-volume transfers.

Q.10 How does data quality governance framework risk management protect me?

A. By linking your data governance and data quality framework to data quality governance framework risk management, DataManagement.AI identifies toxic data that could expose you to compliance fines. We protect you by flagging sensitive or inaccurate information before it becomes a liability.